Google Engineering Director Outlines Agentic Engine Optimization as the New Frontier for Content Strategy in the Age of AI

Addy Osmani, a Director of Engineering at Google Cloud AI, has recently introduced a framework for a new discipline termed Agentic Engine Optimization (AEO). This framework serves as a strategic model designed to make digital content more accessible and usable for autonomous AI agents, a shift that marks a significant departure from traditional Search Engine Optimization (SEO). While SEO has historically focused on ranking content for human consumption via search engines, AEO addresses the emerging reality of a web populated by agents—software entities capable of fetching, parsing, and acting upon information without direct human intervention. This development signals a fundamental transformation in how information is structured, distributed, and monetized across the global digital ecosystem.

The Shift from Human Browsing to Agentic Extraction

The core premise of Osmani’s guidance is that AI agents are fundamentally changing the mechanics of web interaction. In a traditional search model, a user enters a query, views a list of results, and clicks through to various websites, spending time on pages to find specific answers. This human-centric model relies on visual rendering, user interface design, and "dwell time." However, AI agents operate through a process of compression. Rather than navigating through multiple pages, an agent may crawl a site, extract relevant data points, and synthesize a single interaction or output for the user.

In this agentic model, traditional metrics such as click-through rates (CTR) and time on page become less relevant. Instead, the primary objective of a content creator shifts toward ensuring that an agent can successfully parse and utilize the information. If an agent cannot easily find the specific data it needs due to poor structure or excessive "fluff," it may skip the content entirely, leading to the exclusion of that source from the agent’s final response or action.

The Token Economy and the Problem of Context Windows

A central pillar of Osmani’s AEO framework is the management of tokens. In the world of Large Language Models (LLMs), a token is the basic unit of text that the model processes. Every model has a fixed "context window," which is the maximum number of tokens it can process in a single interaction. Context windows have grown considerably, with models like GPT-4 or Gemini now ranging from 128,000 to over 1 million tokens, but the processing power required to analyze massive amounts of text remains a bottleneck.

Osmani points out that when a webpage exceeds an agent’s immediate processing window, parts of the content may be truncated or ignored. Even content that fits can be affected by what researchers call the "lost in the middle" phenomenon, where AI models are effective at retrieving information from the very beginning or very end of a text but struggle to use details buried in the middle. Consequently, token count is becoming a core optimization factor. If a page is too long or contains too much irrelevant "filler" content, the agent may fail to capture the most critical information, leading to distorted or incomplete outputs.

To combat this, Osmani recommends a radical restructuring of content. This involves adopting an "inverted pyramid" style of writing, where the most crucial information—the "who, what, where, when, and why"—is placed at the very top of the document. This ensures that even if an agent only processes the first few thousand tokens, it captures the essential value of the page.
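The front-loading idea above can be checked mechanically. The following is a minimal sketch, not a method from Osmani's guidance: it assumes a rough heuristic of about four characters per token (real tokenizers vary by model), and the function names, the sample page, and the 50-token budget are all hypothetical.

```python
CHARS_PER_TOKEN = 4  # assumption: rough average; real tokenizers differ by model


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a text from its character length."""
    return max(1, len(text) // CHARS_PER_TOKEN) if text else 0


def first_tokens(text: str, budget: int) -> str:
    """Return the leading slice of text that fits in the approximate budget."""
    return text[: budget * CHARS_PER_TOKEN]


def key_facts_in_budget(text: str, key_facts: list[str], budget: int) -> dict[str, bool]:
    """Report which key facts appear in the part an agent would read first."""
    head = first_tokens(text, budget).lower()
    return {fact: fact.lower() in head for fact in key_facts}


# Hypothetical page: two facts up front, one buried after long filler.
page = (
    "Acme Widget 3 ships on 1 March and costs $49. "
    + "Background and company history. " * 200
    + "Returns are accepted within 30 days."
)
report = key_facts_in_budget(page, ["$49", "1 March", "30 days"], budget=50)
print(report)  # {'$49': True, '1 March': True, '30 days': False}
```

Here the return policy falls outside the first 50 estimated tokens, which is the kind of detail an inverted-pyramid rewrite would move toward the top.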

Technical Evolution: Markdown and the /ai Directory

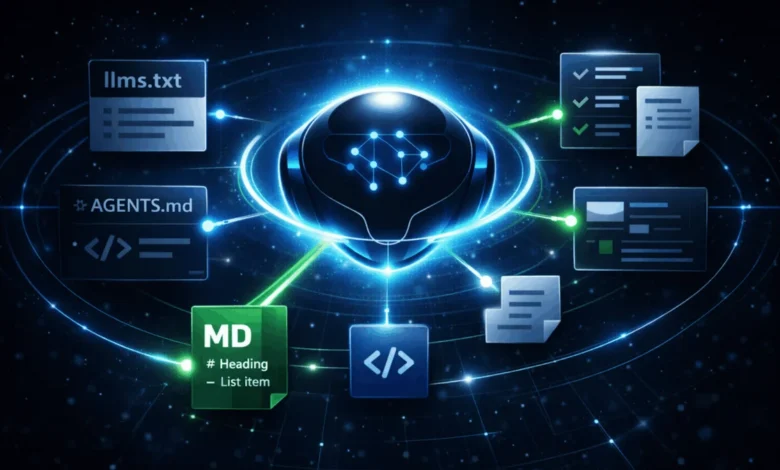

The AEO framework suggests that the technical format of content is just as important as its structure. While HTML is the standard for human-readable web pages, it is often cluttered with scripts, styling tags, and tracking pixels that create "noise" for AI agents. Osmani advocates for the provision of clean Markdown versions of content alongside traditional HTML pages. Markdown is a lightweight markup language that is significantly easier for LLMs to parse, as it strips away the visual layout and focuses strictly on the hierarchy and substance of the text.
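To illustrate how much of an HTML page is "noise," here is a minimal sketch that reduces a page to Markdown using only Python's standard `html.parser` module. It is an illustration under simplifying assumptions, not a production converter: the `MarkdownExtractor` class and `html_to_markdown` function are hypothetical names, only a handful of tags are handled, and nested block elements are not supported.

```python
from html.parser import HTMLParser


class MarkdownExtractor(HTMLParser):
    """Keep headings, paragraphs, and list items; drop script/style noise."""

    SKIP = {"script", "style", "noscript"}
    PREFIX = {"h1": "# ", "h2": "## ", "h3": "### ", "li": "- ", "p": ""}

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._skip_depth = 0   # > 0 while inside a skipped element
        self._current = None   # block tag currently being collected
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1
        elif tag in self.PREFIX and self._skip_depth == 0:
            self._current = tag
            self._buffer = []

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1
        elif tag == self._current:
            # Collapse whitespace and emit one Markdown block.
            text = " ".join("".join(self._buffer).split())
            if text:
                self.blocks.append(self.PREFIX[tag] + text)
            self._current = None

    def handle_data(self, data):
        if self._skip_depth == 0 and self._current is not None:
            self._buffer.append(data)


def html_to_markdown(html: str) -> str:
    """Convert a simple HTML document to a Markdown string."""
    parser = MarkdownExtractor()
    parser.feed(html)
    parser.close()
    return "\n\n".join(parser.blocks)


sample_html = (
    "<html><head><style>p{color:red}</style><script>track()</script></head>"
    "<body><h1>Pricing</h1><p>The plan costs <b>$10</b> per month.</p>"
    "<ul><li>Cancel anytime</li></ul></body></html>"
)
markdown = html_to_markdown(sample_html)
print(markdown)
```

The styling rule and the tracking call disappear from the output, leaving only the heading, the sentence, and the list item for an agent to read.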

Furthermore, the AEO model identifies emerging patterns for content discovery. One such proposal is the use of an llms.txt file, located in the root directory of a website. Similar to how robots.txt provides instructions to search engine crawlers, llms.txt would serve as a roadmap for AI agents, providing a concise, machine-readable summary of the site’s most important information and direct links to high-priority content. Osmani also noted the potential utility of an /ai directory, a dedicated space where developers can host agent-optimized versions of their data, free from the distractions of the standard consumer-facing web experience.
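As a rough illustration of what such a file might contain, the sketch below generates an llms.txt body loosely following the layout of the public llms.txt proposal: a title, a short summary, and sections of annotated links. The `build_llms_txt` helper, the site name, and the example.com URLs under /ai are hypothetical, and the proposal itself may evolve.

```python
def build_llms_txt(
    title: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render a title, summary, and link sections as llms.txt-style Markdown."""
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site data; the /ai paths mirror the directory idea described above.
llms_txt = build_llms_txt(
    title="Example Docs",
    summary="Product documentation for the Example API.",
    sections={
        "Docs": [
            ("Quickstart", "https://example.com/ai/quickstart.md", "Setup in five steps"),
            ("Pricing", "https://example.com/ai/pricing.md", "Plans and limits"),
        ],
    },
)
print(llms_txt)
```

The resulting text would be saved as llms.txt at the site root, where an agent that supports the convention could fetch it before crawling anything else.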

Chronology of the Search Evolution

The emergence of AEO is the latest step in a decades-long evolution of how humans and machines interact with information. To understand the significance of Osmani’s proposal, it is necessary to view it through a chronological lens:

- The Directory Era (1990s): Early search engines like Yahoo! relied on manual human categorization. SEO was virtually non-existent, and the web was a static collection of pages.

- The Algorithmic Era (2000s): Google’s PageRank revolutionized the web by using links as a signal of authority. SEO became a discipline focused on keywords, backlinks, and meta-tags.

- The Semantic and Mobile Era (2010s): The introduction of the Knowledge Graph and mobile-first indexing forced creators to focus on intent and user experience. Schema markup became a tool for helping engines "understand" the relationship between data points.

- The Generative AI Era (2023–Present): The launch of ChatGPT and Google’s AI Overviews (formerly SGE) shifted the focus from lists of links to synthesized answers. This period saw the rise of Answer Engine Optimization, a separate discipline that shares the AEO abbreviation.

- The Agentic Era (2025 and Beyond): As proposed by Osmani, the next phase involves agents that do not just answer questions but perform tasks. This requires content that is not just readable, but "executable" by autonomous systems.

Supporting Data: The Cost of Inefficiency

The necessity for AEO is driven by the economics of AI computation. According to industry data, the cost of processing a single token has decreased significantly over the last 18 months, yet the volume of data being processed has exploded. For a company like Google or OpenAI, running an agent that browses the live web is far more expensive than a traditional search crawl, because every fetched page must be passed through a model rather than simply indexed.

Research into LLM performance has shown that when an agent is forced to parse a 10,000-word article to find a 50-word answer, the likelihood of a "hallucination" (where the AI generates false information) increases by nearly 20% compared to when it is provided with a concise, 500-word summary. By optimizing for token efficiency, publishers are not just helping agents; they are also improving the accuracy of the information the AI provides to the end user.

Conflicting Perspectives within Google

One of the most notable aspects of Osmani’s guidance is the tension it creates with established SEO practices. While Osmani, representing Google Cloud AI, advocates for separate Markdown pages and llms.txt files, other divisions within Google have expressed skepticism.

John Mueller, a Senior Search Analyst at Google Search, has publicly recommended against creating separate Markdown pages for LLMs. Mueller’s stance is rooted in the principle of "one URL per piece of content," suggesting that maintaining multiple versions of a page could lead to indexing confusion and duplicate content issues within traditional Google Search. Furthermore, Google Search representatives have stated that the search engine does not currently use the llms.txt file for ranking or crawling purposes, relying instead on its standard web-crawling infrastructure.

This internal divergence highlights a critical crossroads for the industry. Google Cloud AI is focused on the infrastructure of the future—enabling developers to build agents—while Google Search remains focused on the current advertising-supported model of the human web. For publishers, this creates a dilemma: should they optimize for the human-centric search engines that currently drive their revenue, or the AI agents that are poised to dominate future traffic?

Broader Implications for the Digital Economy

The adoption of AEO has profound implications for the future of the internet. If agents become the primary way people interact with the web, the "attention economy" could collapse. In a world where an agent extracts a recipe or a price comparison without the user ever seeing an advertisement or a "Subscribe to our Newsletter" pop-up, the traditional monetization models of the web will need to be rebuilt.

There is also the risk of a "Two-Tiered Web." Larger corporations with the technical resources to implement AEO—maintaining clean Markdown repositories, structured data, and dedicated AI directories—may see their content prioritized by agents. Smaller publishers who lack the technical expertise to optimize for both humans and machines may find themselves invisible to the next generation of digital assistants.

Analysis of the Agentic Future

The introduction of Agentic Engine Optimization by a high-ranking Google engineer is a clear signal that the era of "passive" content is ending. The web is becoming an active environment where data must be formatted for utility rather than just aesthetics.

AEO is not merely a set of technical tweaks; it is a philosophical shift. It requires content creators to view their websites not as destinations, but as databases. The success of a piece of content will no longer be measured by how many people looked at it, but by how effectively it was utilized by an agent to complete a task. As AI agents move from experimental tools to ubiquitous personal assistants, the principles of AEO—conciseness, structure, and machine-readability—will likely become the standard for any organization wishing to remain relevant in the digital age.

While the conflict between traditional SEO and the new AEO framework remains unresolved, the trend is clear: the future belongs to those who can speak the language of the machines as fluently as they speak to their human audience. For now, the best path for publishers appears to be a hybrid approach—maintaining high-quality HTML for human readers while beginning the process of structural simplification that will allow AI agents to navigate the web with ease.