Machine-First Architecture: AI Agents Are Here, Reshaping the Web, and Most Websites Are Unprepared

The internet is undergoing a rapid transformation as Artificial Intelligence (AI) agents are integrated directly into the browsers used by billions of people. This is no longer a theoretical concept or a future demo: major technology companies are already shipping AI-embedded browsers or browser extensions designed to act autonomously on behalf of users. AI agents that carry out complex, multi-step operations challenge the assumptions on which websites have been built for decades, and the vast majority of sites are structurally unprepared for the emerging "agentic web."

The Dawn of Agentic Browsing: A Chronology of Integration

The path toward agentic AI began with the popularization of large language models (LLMs) in late 2022, most visibly with the public release of ChatGPT. At first, users engaged with AI by asking it questions, much as they did with search engines 25 years ago. The interaction was conversational, with AI serving as an advanced information retriever and content generator. Over the last six to nine months, however, that evolution has accelerated, pushing AI beyond analysis and summarization into active task execution inside the browser.

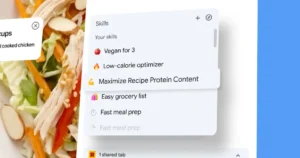

Major technology companies have moved quickly to capitalize on this capability. Anthropic, a prominent AI research company, launched "Claude for Chrome," an extension designed to navigate complex websites, fill out forms accurately, and execute multi-step operations on a user’s behalf. Google announced "Gemini in Chrome with agentic browsing capabilities," including an "auto browse" function that can interact with webpages autonomously to fulfill user requests. Beyond proprietary products, the open-source community is contributing projects such as "OpenClaw," an AI agent that connects LLMs directly to browsers, messaging applications, and system tools so it can carry out tasks with remarkable autonomy. Together, these developments mark a turning point: AI is moving from passive assistant to active participant in online interactions.

A Structural Crisis: Why Websites Are Lagging

The rapid deployment of these agentic capabilities has exposed a significant weakness in the current web ecosystem. Slobodan Manic, who works at the intersection of technical web performance and AI and is the author of a five-part series on optimizing websites for AI agents, states plainly that "structurally almost every website is broken" for this shift. His extensive testing reveals a widespread lack of readiness, pointing to a disconnect between how websites are currently built and how AI agents are designed to interact with them.

Manic highlights that the dynamic has reversed: "It started with us going to AI and asking questions. And now AI is coming to us and meeting us where we are." This reversal calls for a fundamental rethink of web design. Human users can navigate visually complex interfaces intuitively, but AI agents depend on clear, structured data and logical pathways to understand and act on content. Many websites, designed primarily for human visual consumption, lack the semantic structure and machine-readable cues that agents need to operate efficiently and without errors.

The tasks AI agents can now undertake go well beyond simple queries: checking emails, sorting them by priority, drafting qualified responses, and managing calendar appointments. Each of these requires an understanding of context and the ability to chain actions across multiple digital touchpoints. The current web, with its often inconsistent markup, its reliance on JavaScript for core functionality, and its lack of universal machine-readable standards, presents a significant hurdle for these agents.
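Form filling is a concrete place where missing machine-readable cues get in an agent’s way. The sketch below, using only Python’s standard-library `html.parser`, flags `<input>`, `<select>`, and `<textarea>` fields that have no label from a wrapping `<label>`, a `<label for="...">`, `aria-label`, or `aria-labelledby`. The `FieldAudit` class and `unlabeled_fields` function are hypothetical names for illustration; this is a deliberately simplified check, not how any particular agent reads a page, and the full accessible-name rules are more involved.

```python
from html.parser import HTMLParser

FIELD_TAGS = {"input", "select", "textarea"}
# Input types that hold no user-entered value, so they need no label.
SKIP_INPUT_TYPES = {"hidden", "submit", "button", "reset", "image"}


class FieldAudit(HTMLParser):
    """Collects form fields plus the ids that <label for="..."> points at."""

    def __init__(self):
        super().__init__()
        self.fields = []            # (attrs, wrapped_in_label) per field
        self.label_targets = set()  # ids referenced by <label for="...">
        self.label_depth = 0        # > 0 while inside a <label> element

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "label":
            self.label_depth += 1
            if attrs.get("for"):
                self.label_targets.add(attrs["for"])
        elif tag in FIELD_TAGS:
            input_type = (attrs.get("type") or "text").lower()
            if tag == "input" and input_type in SKIP_INPUT_TYPES:
                return
            self.fields.append((attrs, self.label_depth > 0))

    def handle_endtag(self, tag):
        if tag == "label" and self.label_depth > 0:
            self.label_depth -= 1


def unlabeled_fields(html: str) -> list[str]:
    """Hypothetical helper: name every field that has no label an agent can use."""
    audit = FieldAudit()
    audit.feed(html)
    audit.close()
    missing = []
    for attrs, wrapped in audit.fields:
        labelled = (
            wrapped
            or attrs.get("id") in audit.label_targets
            or attrs.get("aria-label")
            or attrs.get("aria-labelledby")
        )
        # A placeholder alone is deliberately not counted as a label.
        if not labelled:
            missing.append(attrs.get("name") or attrs.get("id") or "<unnamed>")
    return missing


page = """
<form>
  <label for="email">Email</label>
  <input id="email" name="email" type="email">
  <label>Postcode <input name="postcode"></label>
  <input name="phone" placeholder="Phone">
  <select name="country"></select>
  <input type="hidden" name="csrf" value="x">
  <button type="submit">Send</button>
</form>
"""
print(unlabeled_fields(page))  # ['phone', 'country']
```

Treating a placeholder as insufficient is a strict choice: the visible hint disappears once a user types, and it is a weak signal for a machine trying to decide which value belongs in which field.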

Websites in Transition: From Destinations to Data Hubs

Manic’s provocative theory suggests that websites, as we traditionally understand them, could become "optional" for the end user in the near future. Instead, interactions might increasingly occur through closed system interfaces, with pages primarily "built by machines for machines." While he clarifies that this vision is a "near to mid future" scenario, not an immediate replacement for human browsing, the signals are concrete and compelling.

A significant indicator of this shift came in January 2024, when Google was granted a patent outlining a system that would allow AI to rewrite landing pages deemed "not good enough." Combined with Gemini’s agentic browsing, this points toward an end-to-end AI system in which humans may simply wait for results while much of the intermediate interaction happens autonomously. That does not mean websites will disappear; rather, it opens "another lane" for digital engagement, much as mobile traffic grew without fully supplanting desktop usage. Manic estimates that elements of this agentic web could become a reality within a year, and that Google’s AI-driven page rewriting could become more widespread by 2027, if not sooner. The implication is clear: human browsing will persist, but a significant share of digital interaction, particularly for transactional or information-gathering purposes, is likely to bypass direct human engagement with a brand’s website.

The Commerce Conundrum: When Checkout Becomes Protocol

One of the most radical implications of the agentic web, as outlined by Manic, is the transformation of e-commerce. He contends that "checkout is becoming a protocol, not a page." In an agentic world, an AI agent could make a purchase on a user’s behalf without ever loading a brand’s own checkout page. This raises critical questions about how brands will build trust and differentiate themselves when the customer may never see their carefully designed website or interact with their brand interface during the final transaction.

Manic argues that relying on a checkout page to build trust is fundamentally flawed. He points to the ubiquitous, often identical, Shopify checkout pages as evidence that these are "machine-readable pages that look the same for everyone, for every brand." True trust, he asserts, is established "before the user needs to pay you." This aligns with Jono Alderson’s concept of "upstream engineering," which emphasizes moving critical work and brand-building efforts away from the final transactional stage and into earlier points of interaction and value delivery. For SEOs, CRO specialists, content creators, and anyone involved in website optimization, this means no longer treating the website as the sole destination and recognizing it as "a part of the equation," not the entire equation. Brands must cultivate trust and distinction through their overall digital presence, product quality, customer service, and community engagement, rather than relying on the visual appeal or functionality of a final checkout page.

Strategies for Survival: Embracing Machine-First Architecture

For SEOs and brands grappling with this seismic shift, the path forward requires a fundamental re-evaluation of their digital strategy. Manic’s advice is stark: "If your website was your storefront, and it was for decades, people come to you, people do business there. It needs to be a warehouse and a storefront moving forward or you’re not going to survive." This analogy underscores the necessity for websites to function not only as customer-facing interfaces but also as robust, organized repositories of machine-readable data.

The core principle for adaptation is "machine-first architecture." This approach dictates that websites should be built for machines before they are built for humans. In practice, this means prioritizing the semantic meaning and structured data of a page. When developing a product page, for instance, the starting point should not be a design mockup or marketing copy but the underlying schema, which defines what the page means to a machine. Only after this machine-readable foundation is in place should the human-centric design and content be layered on top. Manic draws a direct parallel to the "mobile-first" shift, where designing for the more constrained mobile experience first often led to a better, more efficient desktop experience. By the same logic, he argues, designing for the stricter demands of AI agents first should produce a more robust and accessible experience for human users.
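To make "start from the schema" concrete, here is a minimal sketch, assuming a single product and the schema.org `Product` and `Offer` vocabulary embedded as JSON-LD. The data layer is defined first, and the human-facing HTML is then rendered from that same record so the two cannot drift apart. `ProductRecord` and `render_page` are hypothetical names for illustration, not part of any framework, and a production site would normally use a templating engine rather than an f-string.

```python
import json
from dataclasses import dataclass
from html import escape


@dataclass
class ProductRecord:
    """Step 1: define what the page *means* before designing how it looks."""
    name: str
    sku: str
    description: str
    price: str     # kept as a string to avoid float rounding, e.g. "49.00"
    currency: str  # ISO 4217 code, e.g. "EUR"
    in_stock: bool

    def to_json_ld(self) -> dict:
        # schema.org Product with a nested Offer describing price and stock.
        return {
            "@context": "https://schema.org",
            "@type": "Product",
            "name": self.name,
            "sku": self.sku,
            "description": self.description,
            "offers": {
                "@type": "Offer",
                "price": self.price,
                "priceCurrency": self.currency,
                "availability": "https://schema.org/InStock"
                if self.in_stock
                else "https://schema.org/OutOfStock",
            },
        }


def render_page(product: ProductRecord) -> str:
    """Step 2: layer the human-facing markup on top of the same record."""
    # Escape "</" so text such as "</script>" cannot close the JSON-LD block early.
    json_ld = json.dumps(product.to_json_ld(), indent=2).replace("</", "<\\/")
    stock = "In stock" if product.in_stock else "Out of stock"
    return f"""<!doctype html>
<html lang="en">
<head>
  <title>{escape(product.name)}</title>
  <script type="application/ld+json">
{json_ld}
  </script>
</head>
<body>
  <main>
    <h1>{escape(product.name)}</h1>
    <p>{escape(product.description)}</p>
    <p>{escape(product.price)} {escape(product.currency)}</p>
    <p>{stock}</p>
  </main>
</body>
</html>"""


shoe = ProductRecord("Trail Shoe", "TS-001", "Lightweight trail running shoe", "49.00", "EUR", True)
print(render_page(shoe))
```

Because the JSON-LD and the visible text both come from one `ProductRecord`, a price or stock change updates what machines read and what humans see in a single step, which is the property the machine-first argument relies on.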

Moreover, optimization efforts must extend beyond the confines of a single website. In an agentic world, AI will aggregate information from diverse sources. Therefore, brands must ensure consistency and accuracy across all their online profiles and digital assets – "everything everywhere all at once." This holistic approach is crucial for AI agents to accurately represent and interact with a brand.
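Checking "everything everywhere all at once" can start with something as simple as comparing the core facts each profile publishes. The sketch below assumes those facts have already been collected per source into dictionaries; the source names, fields, and values are hypothetical sample data, not real listings. It reports every field whose values disagree after ignoring case and whitespace.

```python
def normalize(value: str) -> str:
    # Ignore case and runs of whitespace so trivial formatting differences
    # are not reported as conflicts.
    return " ".join(value.lower().split())


def find_conflicts(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, set[str]]]:
    """Map each conflicting field to {normalized value: sources publishing it}."""
    by_field: dict[str, dict[str, set[str]]] = {}
    for source, facts in profiles.items():
        for field, value in facts.items():
            by_field.setdefault(field, {}).setdefault(normalize(value), set()).add(source)
    # A field only conflicts if sources publish more than one distinct value.
    return {field: values for field, values in by_field.items() if len(values) > 1}


# Hypothetical sample data for one brand across three profiles.
profiles = {
    "website": {"name": "Acme Outdoor", "phone": "+1 555 0100", "hours": "Mon-Fri 9-17"},
    "maps_listing": {"name": "ACME Outdoor", "phone": "+1 555 0100", "hours": "Mon-Sat 9-17"},
    "social_profile": {"name": "Acme  Outdoor", "phone": "+1 555 0199"},
}
for field, values in sorted(find_conflicts(profiles).items()):
    print(field, values)
```

A field that a source simply omits (here, `hours` on the social profile) is not flagged; whether a gap matters as much as a contradiction is a judgment call this sketch leaves to the reader.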

Manic firmly rejects the notion that optimizing for LLMs is fundamentally different from traditional SEO. He asserts that if foundational SEO practices are implemented correctly, with attention to technical health, structured data, accessibility, and clear content, the principles remain largely the same. The key difference, he notes, lies in the "speed of consequences" and their "greater" magnitude: flaws that might have gone unnoticed by human users or traditional crawlers are now rapidly exposed by sophisticated AI agents.

The Peril of "Vibe Coding" and the Power of Deep Work

In an environment that constantly introduces new tools and technologies, Manic cautions against "vibe coding," a term he uses for a superficial approach to problem-solving that lacks deep understanding and leans on fleeting trends. For the overwhelmed SEO practitioner, his advice is refreshingly pragmatic: "It’s really fixing every single foundational thing that you have on your website or your website presence." This means prioritizing fundamental web hygiene, ensuring basic functionality even with JavaScript disabled, and addressing core technical issues before chasing the latest "shiny toy."
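One quick way to test "basic functionality even with JavaScript disabled" is to look at what the server sends before any script runs. The sketch below uses Python’s standard-library `html.parser` to collect the text in raw HTML, skipping `<script>`, `<style>`, and `<template>` contents, and reports which must-have phrases are absent. `VisibleText`, `missing_without_js`, and the two sample pages are hypothetical; in practice you would fetch the raw HTML (for example with `urllib.request`) rather than hard-code it, and this check ignores CSS that hides text.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text present in raw HTML, i.e. what a client that runs no JS receives."""

    HIDDEN = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self.skip_depth = 0           # > 0 while inside a hidden element
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.HIDDEN:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.HIDDEN and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        if self.skip_depth == 0:
            self.chunks.append(data)


def missing_without_js(raw_html: str, required: list[str]) -> list[str]:
    """Return the required phrases that do not appear in the no-JS text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    # Collapse whitespace and lowercase so layout differences do not matter.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in required if phrase.lower() not in text]


client_rendered = """
<html><body>
  <div id="app"></div>
  <script>document.getElementById("app").textContent = "Trail Shoe 49.00 EUR";</script>
</body></html>
"""
server_rendered = """
<html><body>
  <main><h1>Trail Shoe</h1><p>49.00 EUR</p></main>
</body></html>
"""
must_have = ["Trail Shoe", "49.00 EUR"]
print(missing_without_js(client_rendered, must_have))  # ['Trail Shoe', '49.00 EUR']
print(missing_without_js(server_rendered, must_have))  # []
```

The client-rendered page only contains its product name and price inside a script, so anything that reads the page without executing JavaScript sees an empty shell.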

While AI-assisted coding and content generation tools are undeniably valuable, Manic stresses that their utility is maximized only when the user possesses a strong foundational understanding. AI can accelerate processes, but it cannot compensate for a lack of expertise. As he succinctly puts it, "You need to know what good is and what good looks like. Because AI will always give you something. If you don’t know enough about that specific thing, it will always look good from the outside. And there’s a reason why everyone is okay with vibing everything except for their own profession, because they try it and they see that the results are just horrific." This applies equally to content creation; AI can produce grammatically correct text, but discerning its factual accuracy, relevance, and true value requires human expertise.

Broader Implications and the Path Forward

The emergence of AI agents marks a critical inflection point for the digital economy. The implications extend beyond SEO and web development, touching upon brand strategy, customer experience, and even the fundamental nature of online commerce. Businesses that fail to adapt risk becoming invisible in an agent-mediated world, losing direct engagement with consumers and potentially ceding control of their brand narrative to AI systems that curate information on their behalf.

Conversely, brands that embrace machine-first architecture and a holistic digital presence stand to gain significant advantages. They can achieve greater visibility through AI agents, provide seamless experiences for users, and potentially unlock new avenues for customer acquisition and retention. This transition will likely foster a new generation of web standards and protocols, emphasizing structured data, interoperability, and robust API integrations to facilitate efficient machine-to-machine communication. Adapting is urgent, and it requires strategic foresight and a commitment to foundational digital excellence.

Ultimately, the core takeaway from this evolving landscape is a call to build for machines first, and then for humans. This approach does not diminish the importance of human user experience; rather, by establishing a robust, machine-readable foundation, the subsequent human-facing layer becomes inherently more effective, accessible, and future-proof. Websites are no longer isolated digital storefronts; they are integral components of a vast, interconnected digital ecosystem. Brands that recognize and adapt to this reality, treating their websites as part of a wider, machine-intelligible network rather than the sole point of interaction, are the ones best positioned to thrive in the era of agentic AI.