The Pervasive Trust Deficit: Why Consumer Skepticism Threatens the AI Revolution

Leaders across technology, marketing, and customer experience (CX) agree almost universally that trust is the foundation on which the successful adoption of artificial intelligence (AI) will be built. Consumer sentiment, however, tells a different story: it reveals a deep and pervasive distrust of AI. New data from Forrester points to a prevailing "distrust by default" among consumers. That is a significant hurdle for organizations that want to use AI to improve customer engagement and operational efficiency.

This wariness is not confined to a single market. In France, only 10% of consumers trust information provided by AI. German consumers are similarly cautious: just 12% trust companies that use AI in their customer interactions. In the United Kingdom, more than one-third of consumers see AI as a serious threat to society. The United States follows the same pattern, with just 16% of people trusting AI-generated information and one-third believing AI poses a significant danger to society.

These statistics point to a critical "trust gap" that could derail even the most carefully planned AI initiatives. When a significant share of a customer base approaches AI with skepticism or outright fear, any AI-powered customer experience is compromised from the start. How can an organization succeed with AI-driven solutions if its primary audience resists them by default?

Navigating the New Normal: An Opportunity in Distrust

Challenging as it is, this skepticism also presents a pivotal opportunity. As businesses navigate what is arguably the most transformative technological shift in their history, they have the chance to actively reshape consumer expectations and experiences around AI. Doing so is not a matter of ticking off compliance or ethics checklists; it requires embedding trust at the core of AI strategy.

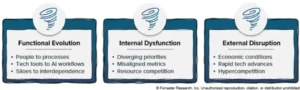

This approach must run through every stage of AI development and deployment, from the initial design of algorithms to the transparent communication of AI-driven decisions to customers. It requires breaking down organizational silos and adopting a new framework for responsible AI that directly addresses consumer concerns. Organizations must put into practice the core tenets that foster trust: transparency, fairness, privacy, and reliability. By applying these principles consistently, companies can create an environment in which customers feel secure and comfortable using AI-enabled products and services.

Organizations that successfully close this trust gap will not only mitigate significant risks but also gain a distinct brand advantage in an increasingly AI-driven marketplace. That differentiation will resonate with existing and prospective customers alike and establish these organizations as pioneers in responsible AI adoption.

Addressing the Trust Deficit: A Call to Action at CX Summit EMEA

This challenge will be a central theme at Forrester's upcoming CX Summit EMEA, taking place in Amsterdam from June 8-10. In the keynote session "Distrust In The Age Of AI," I will examine this issue, presenting new research-backed insights and real-world examples. The session is designed to equip marketing, digital, and CX leaders with practical strategies for turning distrust from a hurdle into an organizational strength, including concrete steps for implementing responsible AI practices that resonate with consumers and build confidence and acceptance.

For those in the United States, similar insights will be delivered by my colleague, Jess Lloyd. She will present the keynote at CX Forum East in New York City and CX Forum West in San Francisco later in June, providing regionally relevant perspectives and actionable guidance.

AI in customer experience is no longer a future prospect; it is an immediate reality, and its success hinges on whether organizations can earn and keep their customers' trust. Whether the initiative is an AI-powered chatbot, customer journeys personalized through machine learning, or the next generation of intelligent products, attendees will leave with guidance for ensuring their AI innovations are met with enthusiasm and acceptance rather than skepticism and apprehension. For marketing, digital, and CX leaders eager to harness AI's full potential, these keynotes offer a valuable opportunity to gain the knowledge and tools they need.

Supporting Data and the Evolution of Consumer Trust

The contemporary landscape of AI adoption is marked by a significant disconnect between technological advancement and consumer perception. While businesses are rapidly integrating AI into their operations, the public remains largely unconvinced of its inherent benefits and safety. This sentiment is not new, but it has been amplified by a series of high-profile incidents and ongoing societal debates surrounding data privacy, algorithmic bias, and the potential for job displacement.

Historical Context of Trust in Technology:

Consumer trust in technology has always been a dynamic and evolving construct. Early adoption of new technologies, from the internet to mobile devices, was often met with a mixture of excitement and apprehension. However, the pervasive nature of AI and its potential to deeply influence decision-making processes, personal lives, and societal structures has elevated the stakes considerably. The widespread availability of information, coupled with the increasing sophistication of AI’s applications, has empowered consumers to be more critical and discerning.

A Deeper Dive Into Forrester’s Research:

The Forrester data cited above paints a stark picture. The "distrust by default" phenomenon suggests that consumers are no longer willing to give AI the benefit of the doubt. Several factors contribute to this initial skepticism:

- Lack of Understanding: Many consumers lack a fundamental understanding of how AI works, leading to a reliance on sensationalized media portrayals or speculative narratives. This knowledge gap can foster fear and uncertainty.

- Privacy Concerns: The collection and utilization of vast amounts of personal data, often a prerequisite for AI functionality, raise significant privacy concerns. Consumers worry about how their data is being used, who has access to it, and whether it is being adequately protected.

- Algorithmic Bias: Reports of AI systems exhibiting bias based on race, gender, or other demographic factors have eroded public confidence. When AI systems make unfair or discriminatory decisions, those outcomes directly undermine trust.

- Job Displacement Fears: The automation capabilities of AI have fueled anxieties about widespread job losses, leading to a negative perception of the technology’s broader societal impact.

- Transparency Deficits: The "black box" nature of some AI algorithms makes it difficult for consumers to understand the reasoning behind AI-driven decisions, further contributing to distrust.

Timeline of AI Integration and Emerging Concerns:

The rapid acceleration of AI development and deployment over the past decade has coincided with a growing awareness of its ethical and societal implications.

- Early 2010s: Growing focus on machine learning and big data analytics, as consumer-facing applications such as recommendation engines and voice assistants enter the mainstream.

- Mid-2010s: AI starts to permeate more critical areas like finance, healthcare, and criminal justice, leading to early discussions about bias and fairness.

- Late 2010s – Early 2020s: Heightened public awareness of AI’s potential impact, fueled by events such as the Cambridge Analytica scandal, debates around autonomous vehicles, and the increasing use of AI in surveillance and hiring processes. This period has seen a significant rise in consumer skepticism and regulatory scrutiny.

- Present Day: The current juncture is characterized by the widespread acknowledgment of the trust deficit, prompting organizations to prioritize responsible AI development and ethical deployment as a strategic imperative.

Broader Impact and Implications for Businesses

The implications of this pervasive consumer distrust extend far beyond individual AI implementations. For businesses, it represents a fundamental challenge to their ability to innovate, engage customers, and maintain a competitive edge.

Risk Mitigation:

Failure to address the trust deficit can lead to significant reputational damage, regulatory penalties, and customer churn. Organizations that are perceived as irresponsible or untrustworthy in their use of AI risk alienating their customer base and facing increased scrutiny from consumer advocacy groups and regulatory bodies.

Brand Differentiation:

Conversely, organizations that proactively prioritize trust in their AI strategies can differentiate themselves in a crowded market. By demonstrating a commitment to transparency, fairness, and privacy, businesses can build a reputation as responsible AI innovators, fostering deeper customer loyalty and attracting new customers who value ethical technology.

Strategic Imperative:

In this AI-driven era, trust is not merely a desirable attribute; it is a strategic imperative. Businesses that fail to build and maintain consumer trust risk being left behind as the technological revolution progresses. The key to success lies in a holistic approach that integrates trust-building principles into every facet of AI development and deployment. This requires a cultural shift within organizations, fostering collaboration between technical teams, ethics boards, legal departments, and customer-facing roles.

The journey toward building trust in AI is ongoing and complex. It demands continuous learning, adaptation, and a genuine commitment to placing the customer’s well-being and confidence at the forefront of all AI-related endeavors. The upcoming Forrester events offer a crucial platform for leaders to convene, share insights, and collectively chart a course toward a future where AI and consumer trust can coexist and thrive.