Most IT leaders worry that their cloud spending isn’t keeping pace with their AI ambitions, let alone closing the cloud maturity gap that stands between them and AI success.

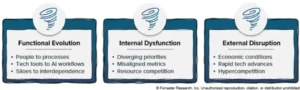

The demands of artificial intelligence are outpacing the cloud infrastructure needed to support them, leaving a majority of IT leaders concerned about their organizations’ readiness for AI-driven innovation. The concern stems from a perceived shortfall in cloud investment and proficiency that could jeopardize AI initiatives and broader digital transformation efforts.

Recent findings from an NTT DATA report highlight a stark reality: only 14% of organizations have reached what the report defines as "cloud evolved." In this state, cloud-led innovation is actively accelerating business transformation, and cloud-native services are deeply integrated into core operational strategies. These are the organizations best positioned to harness the full potential of AI.

An additional 34% of senior IT decision-makers surveyed by NTT DATA describe their cloud approach as "mature." This designation indicates a broad and strategic application of cloud services across business units, underpinned by robust governance frameworks, established best practices, and the capability to manage scalable workloads. While a significant step forward, this segment still falls short of "evolved" status, which suggests room to improve its cloud readiness for advanced AI deployments.

Together, the "evolved" and "mature" groups account for 48% of those surveyed, which means more than half of organizations sit below the level of cloud maturity needed to leverage AI effectively. About a quarter of respondents are categorized as "cloud enabled," suggesting a more basic adoption of cloud services, while nearly another quarter are identified as "cloud novices," indicating minimal or nascent engagement with cloud technologies. This substantial gap in cloud proficiency presents a formidable barrier for many organizations aspiring to adopt and scale AI in their operations.

The urgency to deploy AI solutions is leading many IT leaders into a precarious financial strategy: diverting funds from essential cloud infrastructure and operations to fuel AI projects. This "robbing Peter to pay Paul" approach carries significant risks. Nearly nine in ten IT leaders surveyed (88%) are concerned that insufficient investment in cloud infrastructure will directly imperil their AI, cloud-native development, and modernization initiatives. The anxiety is amplified by the fact that, even though AI workloads drive up cloud usage, 84% of respondents report that their cloud spending has remained stagnant over the past year. Flat spending combined with escalating AI ambitions creates a critical imbalance.

Charlie Li, president and global head of cloud and security at NTT DATA, elaborates on this "robbing Peter to pay Paul" phenomenon. He explains that as organizations reallocate budgets to fund AI pilots and experiments, the foundational cloud infrastructure—an indispensable component of any successful AI endeavor—is often neglected. "The cloud side is not getting the money," Li states. "The frustration is, ‘In order to do AI, I’ve got to spend money here, and I have no money here, but I’ve been throwing in a lot of money for AI. So I end up wasting a lot of money doing a bunch of pilots.’"

Li further illustrates this point by noting that some NTT DATA clients possess the financial capacity to initiate dozens of AI pilot programs. However, these CIOs have not secured any incremental budget allocation for cloud services to support these ambitious ventures. "The CIO is sitting there going, ‘Wait a minute, I’ve got to do these things in the cloud side in order to be able to do AI, but I have no money to do it,’" he emphasizes.

NTT DATA views cloud services as intrinsically vital for AI development due to the immense computational power they provide. "You need humongous scales of data and processing power," Li explains. "Those two things are what’s really led to the advent of the gen AI trend that we actually see today. You cannot do this with 100 servers sitting in your own data center." The sheer scale of data processing and storage required for advanced AI models, particularly generative AI, necessitates the elastic scalability and robust infrastructure that cloud platforms offer. On-premises solutions, even those with substantial capital investment, often struggle to match the dynamic resource allocation and processing capabilities of major cloud providers.

Beyond computational power, an evolved cloud strategy is also crucial for the successful implementation of AI projects by fostering the necessary data maturity. Li posits, "If you don’t have a mature cloud strategy or implementation, your data is still all over the place. If you’ve got junk data, if you have poor data governance, none of your trained models are going to be accurate." Effective AI relies on clean, well-governed, and accessible data. A fragmented or immature cloud environment often mirrors this disarray in data management, leading to inaccurate training data and, consequently, unreliable AI models.

The challenge of scaling AI on "messy clouds" is a sentiment echoed by other industry experts. Adnan Masood, chief AI architect at digital transformation solutions provider UST, states, "I’ve yet to see an AI program scale cleanly on top of a messy cloud estate. Teams can get a demo running that way, sure. Production is where weak data governance, brittle integrations, poor observability, and runaway compute costs show up." Masood’s observation underscores the critical distinction between demonstrating AI capabilities in a controlled environment and achieving reliable, scalable performance in a production setting. The inherent complexities of data governance, system integration, performance monitoring, and cost management become acutely apparent when AI initiatives move beyond the pilot phase.

Masood confirms that the NTT DATA survey’s findings align with his observations in the market. While acknowledging that on-premises AI projects can be viable in specific, limited scenarios—particularly for highly regulated industries that require stringent data sovereignty—he maintains that most organizations stand to benefit significantly from a cloud-centric approach. "Enterprises can get some AI footing without a strong cloud strategy—usually a contained assistant, internal search layer, or a narrow automation flow—but the odds of scaling it cleanly are low," Masood elaborates. He adds that for on-premises AI to function effectively, the management layer must be exceptionally mature, encompassing AI-ready data pipelines, robust orchestration, efficient model serving, comprehensive observability, stringent security protocols, resilient cyber-recovery capabilities, and meticulous governance. He concludes that these are precisely the areas where most enterprises are still lagging.

The journey from an AI pilot to a fully operational AI system often depends on an organization’s cloud maturity. Quais Taraki, CTO at AI and data platform provider EnterpriseDB, explains that cloud maturity alone is not a panacea. Even so, companies further along in cloud adoption typically have more modern data architectures, stronger governance, and better interoperability across environments. They also tend to have infrastructure that can support production-scale workloads without faltering under real-time concurrency and substantial data volumes.

However, Taraki cautions that substantial cloud spending does not automatically translate into cloud maturity. He notes that some organizations that have invested heavily in cloud technologies still encounter difficulties in transitioning AI pilots to production. This stems from an inadequate data architecture that cannot support the real-time, multi-environment workloads demanded by production-grade AI. "Moving workloads to the cloud does not simplify an architecture that was fragmented before you moved it," Taraki observes. "What we see consistently is that cloud investment helps when it creates a more unified, flexible, and governed foundation where data and AI can operate together without silos." The key is not merely migrating to the cloud, but doing so in a manner that consolidates and optimizes data and AI workflows.

Conversely, cloud investments can inadvertently hinder AI initiatives. Taraki points out instances where cloud adoption increases operational complexity, particularly when pricing models for advanced analytics and AI solutions are unpredictable, making scalable deployment financially challenging. Furthermore, vendor dependencies can constrain an organization’s ability to adapt to evolving AI requirements. "When the data and system governance architecture of the cloud environments are fragmented, teams spend too much time moving data across systems, absorbing unpredictable cost, and managing operational friction that compounds the moment AI becomes agentic and starts acting on data rather than just querying it," he warns. This friction can lead to significant cost overruns and performance degradation, especially as AI systems become more autonomous and interact directly with data.

The implications of this widespread cloud maturity gap for AI success are far-reaching. Organizations that fail to bridge this divide risk falling behind competitors who are effectively integrating AI into their operations. This could manifest in several ways: a reduced ability to innovate and develop new AI-powered products and services, a diminished capacity to optimize existing business processes through AI-driven automation and insights, and an increased vulnerability to market disruptions. The race for AI dominance is intrinsically linked to the robustness of an organization’s cloud infrastructure and its strategic approach to cloud adoption.

Furthermore, the current economic climate, marked by inflationary pressures and a cautious approach to capital expenditure, may exacerbate the problem. IT leaders may find it increasingly difficult to secure the necessary budget for both cloud modernization and AI development, forcing difficult trade-offs. This could lead to a scenario where organizations invest in AI capabilities that remain largely experimental or confined to departmental use cases, failing to achieve enterprise-wide impact.

Taken together, the survey findings and expert observations suggest that a proactive, strategic approach to the cloud is paramount. This involves not only increasing cloud spend but also directing investments toward a cohesive, secure, and data-centric cloud environment. Organizations need to prioritize initiatives that enhance data governance, streamline data pipelines, and foster interoperability between cloud services and on-premises systems. A clear roadmap for cloud maturity, aligned with specific AI objectives, is essential for realizing the transformative potential of artificial intelligence. The findings from NTT DATA serve as a wake-up call, urging IT leaders to re-evaluate their cloud strategies and investments so that they are not just chasing the AI trend but building the foundation for its sustainable success.