Global AI Innovation Summit Concludes in San Francisco: Nine Key Takeaways Shaping the Future of Artificial Intelligence

San Francisco, a crucible of technological innovation, recently played host to a landmark gathering of artificial intelligence leaders at the Global AI Innovation Summit. Drawing an unprecedented 6,714 attendees from 79 countries, the massive conference at the Moscone Center featured over 500 sessions and accumulated more than 35,000 minutes of content, underscoring the accelerating pace and global reach of AI development. The discussions spanned a wide array of topics, from foundational model advancements to practical enterprise applications, with a clear focus on the strategic shifts required for businesses to harness the next generation of AI. Experts unveiled critical insights, frameworks, and mental models that are poised to redefine the AI landscape over the coming years, signaling a profound transformation across industries.

The Nine Defining Takeaways from the Summit

From the vast ocean of discourse, nine paramount insights emerged, offering a roadmap for navigating AI’s rapidly evolving frontier. These takeaways, combined with powerful new frameworks, provide a comprehensive understanding of where AI is headed and how organizations can prepare.

1. Spatial Intelligence: The Next Frontier Beyond Language

A pivotal thesis delivered by Dr. Fei-Fei Li, founder of World Labs and co-director of the Stanford Institute for Human-Centered AI (HAI), posited that the AI advancements to date, primarily driven by large language models (LLMs), have created "wordsmiths in the dark." While these models excel at generating and understanding text, they fundamentally lack an intrinsic comprehension of the three-dimensional physical world, its physics, movement, and causality. This abstract nature limits their ability to interact meaningfully with reality.

Spatial intelligence, defined as the capacity to perceive, reason about, and generate within actual 3D space, was identified as the crucial missing layer. Without it, AI cannot effectively teach a robot to perform complex movements, construct dynamic virtual environments for gaming or simulation, or interpret intricate 3D medical scans for accurate diagnosis. The implications are vast: while language models suffice for purely abstract or text-based applications, any AI interacting with the physical world—such as in robotics, supply chain optimization, advanced manufacturing, architectural design, or diagnostic healthcare—demands spatial grounding.

This emerging domain presents a significant data challenge, as high-quality spatial training data is far scarcer than language data. Consequently, companies that master the "synthetic data flywheel" (building models capable of generating realistic 3D worlds to train other AI systems and robots, thus creating new, diverse training data) are expected to dominate the next decade, much as foundational models shaped the current one. The vendors leading in spatial intelligence development are poised to become the indispensable infrastructure providers of the 2030s, offering foundational capabilities for a new era of physically aware AI.

2. Jensen Huang’s Three Waves of AI: Generative, Reasoning, Agentic

NVIDIA CEO Jensen Huang provided a clarifying trajectory for AI development, outlining three distinct waves that many in the industry often conflate. This framework offers a crucial lens for understanding the progression and strategic implications of AI.

- Wave 1: Generative (2023-2024): Characterized by AI’s ability to create novel content, from text and images to code and music, based on learned patterns. This wave has seen widespread adoption of tools that augment human creativity and productivity.

- Wave 2: Reasoning (2024-2025): Focuses on AI’s capacity to engage in multi-step problem-solving, planning, and logical inference. This moves beyond mere generation to understanding context and making informed decisions.

- Wave 3: Agentic (2025-2026+): Represents the pinnacle, where AI agents can autonomously execute complex tasks, interact with various systems, and iterate on solutions with minimal human intervention.

Huang’s most striking prediction is that within 12-18 months, a non-technical CEO will be able to articulate a business problem to an AI agent, which will then execute the solution end-to-end. This involves integrating systems, reading documentation, iterating on tasks, and ultimately resolving the issue—a significant leap beyond merely drafting an email. This shift fundamentally "collapses the entire skill ladder," transforming the role of engineers from orchestrators of systems to articulators of desired outcomes for AI agents. The bottleneck moves from technical execution to clear problem definition and strategic guidance.

Enterprises were urged not to wait for agents to be "fully ready," as they are already capable of handling approximately 70% of routine workflows. The competitive advantage now lies in swift orchestration and adoption, not in waiting for technological perfection. Organizations that integrate agentic AI into their core workflows within the next few months are positioned to define industry operating patterns, leaving late adopters to merely copy.

3. The Declining Value of Execution, The Ascendance of Taste

Insights from OpenAI’s Srinivas Narayanan highlighted a profound shift in the value of skills within AI-driven environments. His engineers no longer primarily write code; instead, they guide AI agents that generate code. Approximately 80% of their time is now dedicated to "judgment"—discerning errors in AI output, determining next steps, and verifying accuracy. The AI provides the execution, while the human provides the critical "taste."

This paradigm inversion necessitates a re-evaluation of hiring and promotion strategies. The "fast executor" is becoming a commoditized skill. What is increasingly valuable are individuals with deep domain expertise, a keen eye for identifying flaws in AI-generated outputs before deployment, and the ability to exercise rapid, informed judgment. Companies still prioritizing execution speed risk hiring for outdated bottlenecks, missing the opportunity to cultivate the critical human oversight and discernment that AI demands.

4. "Human in the Lead, Not in the Loop": Scaling Enterprise AI

The concept of "human oversight" in enterprises often defaults to humans constantly validating every AI decision, a process that inherently hinders scalability. The summit emphasized a more effective model: "human in the lead." This approach positions humans to define the vision, set strategic goals, and establish key performance indicators, while AI agents are empowered to handle execution at scale.

This distinction has significant implications for how AI solutions are sold and adopted. Sales efforts may need to shift from targeting IT or operations managers, whose focus is controlling every variable, to engaging business leaders keen on setting strategic direction and accelerating operations at venture speed. This requires a different message, a different buyer persona, and potentially a completely revised sales cycle for AI vendors.

5. Problem Insight as the New Scarcity, Not Model Superiority

The narrative around proprietary "model superiority" has inverted. OpenEvidence, a company valued at over $12 billion with only 70 employees (35 engineers) and daily usage by over 60% of U.S. physicians, serves as a powerful case study. This startup doesn’t own a custom foundation model; it leverages existing models. Its success stems from owning the problem: deeply understanding what doctors need and building backward from that customer insight.

Mark Terbeek of Greycroft and Hans Tung of Notable Capital observed a consistent pattern among winning companies: success is less about superior technology and more about proximity to the customer’s pain point. In an era where models improve weekly and are increasingly commoditized, speed to market and a profound understanding of customer problems consistently outperform mere feature parity. A nimble, problem-focused team can outmaneuver larger enterprises burdened by committees and slower deployment cycles. This insight dismantles traditional "moat-building" strategies centered on technology ownership, replacing them with defensibility rooted in deep domain knowledge and organizational agility.

6. Unit Economics: The Bedrock of Durable AI Companies

Salil Deshpande, a solo GP managing $750 million, highlighted a critical, often-avoided crisis: many AI infrastructure companies operate with negative gross margins. The rapid, weekly improvement of models, combined with the 9-month lead maintained by closed-source providers, creates an unsustainable economic model. Deshpande drew parallels to the dot-com era’s overbuilding of infrastructure, predicting a similar collapse that will eliminate companies built on fleeting trends rather than sound unit economics.

For operators evaluating AI vendors, a gross margin below 70% was called out as a red flag, suggesting a focus on growth at any cost over long-term durability. Such vendors risk pivoting, being acquired, shutting down, or being forced into down rounds that compromise support. Enterprises were advised to inquire about customer acquisition cost (CAC) payback and gross margin trajectory; evasiveness should be seen as a warning sign. Sustainable unit economics are paramount for any company aiming for long-term viability in the AI space.
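As a rough illustration of the two checks described above, the sketch below computes a gross margin and a CAC payback period. The formulas are the standard definitions; the 70% threshold is the one cited at the summit, while the vendor figures and function names are invented for illustration only.

```python
def gross_margin(revenue: float, cogs: float) -> float:
    """Gross margin as a fraction of revenue."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - cogs) / revenue


def cac_payback_months(cac: float, monthly_revenue_per_customer: float, margin: float) -> float:
    """Months of gross profit from one customer needed to recover its acquisition cost."""
    monthly_gross_profit = monthly_revenue_per_customer * margin
    if monthly_gross_profit <= 0:
        return float("inf")  # a zero or negative margin never pays back
    return cac / monthly_gross_profit


RED_FLAG_MARGIN = 0.70  # threshold cited at the summit

# Hypothetical vendor figures, not taken from any real company.
margin = gross_margin(revenue=10_000_000, cogs=4_500_000)
payback = cac_payback_months(cac=12_000, monthly_revenue_per_customer=2_000, margin=margin)
is_red_flag = margin < RED_FLAG_MARGIN

print(f"gross margin: {margin:.0%}, CAC payback: {payback:.1f} months, red flag: {is_red_flag}")
```

In this made-up case the vendor falls below the 70% line, which under the summit's heuristic would prompt further questions about its margin trajectory.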

7. Agents Transform Processes, Not Displace Teams

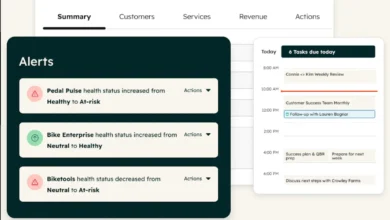

Madhav Thattai of Salesforce’s Agentforce and Rob Seaman of Slack debunked the prevalent "AI will displace workers" narrative. Agentforce, with an $800 million ARR and 25,000 customers running billions of agent transactions, demonstrates that companies are not shrinking but accelerating operations and pursuing more ambitious goals.

The fear of job displacement is largely misplaced. Instead, agents automate rote, repetitive tasks, thereby revealing the true high-value work that humans should be doing—tasks requiring judgment, empathy, complex problem-solving, and strategic thinking. When an agent handles 80% of routine customer service inquiries, the remaining 20% of complex cases, demanding human nuance, become visible and elevate the human’s value within the organization. Engineers, similarly, evolve from coders to architects who brief agents, review system designs, and make strategic technical decisions.

8. Strategic Placement: The Crucial Factor for Agent Adoption

Slack’s experience, with a billion messages daily and a 1,000% increase in AI app development, revealed a critical insight: an agent’s effectiveness often hinges more on its placement than its raw intelligence. An agent buried on a separate website or requiring multiple clicks for access will see low adoption. The same agent, seamlessly integrated into an existing workflow—like appearing contextually within Slack—can see adoption rates jump by 25% immediately.

Most AI investments fail not because of agent capability, but due to poor placement. Expecting sales teams to "discover" an agent, log into a new system, and learn a new interface is a losing proposition against an agent that intuitively surfaces within the flow of work. Rob Seaman described Slackbot as an "invisible router" that intelligently directs users to the right agent or system based on their query, making agents an inherent part of the work process rather than an external tool. For product developers, the lesson is clear: build capable agents, but obsessively architect their placement within existing user workflows to overcome human friction and maximize utility.
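The "invisible router" idea can be sketched as a small dispatcher that sits inside the existing chat surface and forwards each query to an appropriate agent. This is a minimal sketch under stated assumptions: keyword matching stands in for whatever classification a production router would use, the agent names are hypothetical, and nothing here reflects how Slackbot is actually implemented.

```python
import re
from typing import Callable, List, Tuple


# Hypothetical agents; each one simply returns a labeled string for the demo.
def hr_agent(query: str) -> str:
    return f"[hr] handling: {query}"


def it_agent(query: str) -> str:
    return f"[it] handling: {query}"


def fallback_agent(query: str) -> str:
    return f"[human] escalating: {query}"


# Keyword routing is an assumption made for brevity.
ROUTES: List[Tuple[Tuple[str, ...], Callable[[str], str]]] = [
    (("vacation", "payroll", "benefits"), hr_agent),
    (("password", "laptop", "vpn"), it_agent),
]


def route(query: str) -> str:
    """Send the query to the first agent whose keywords appear in it."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    for keywords, agent in ROUTES:
        if words & set(keywords):
            return agent(query)
    return fallback_agent(query)


print(route("How do I reset my password?"))
print(route("How much vacation do I have left?"))
print(route("Who approves the Q3 budget?"))
```

The point of the design is that the user never chooses an agent or leaves the conversation; the routing happens where the question is asked.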

9. Day 2 is Harder than Day 1: The Observability Battle

Many founders perceive "Day 1"—building and launching an AI agent—as the primary challenge. However, the summit highlighted that "Day 2"—the ongoing operation, refinement, and measurement of an agent’s performance—is where the real work begins and where most teams are unprepared.

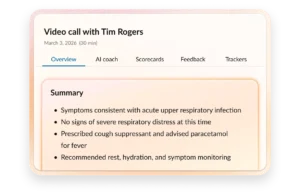

Companies often rush to deploy agents in weeks, only to find they lack visibility into their actual performance. Is the agent working as intended? Is it handling the right cases? Is its behavior "drifting" as business conditions change? In highly regulated industries like healthcare and finance, "mostly works" is a recipe for legal and operational disaster.

Observability is the key differentiator between rapidly growing and stalled AI initiatives. Winning companies prioritize measurement over mere capability. This involves tracking "Agentic Work Units" (actual completed tasks, not just tokens), monitoring KPI delivery, and proactively detecting drift. Salesforce’s own journey revealed that building an agent took 1.5 months, but refining, measuring, and optimizing it required over 2 months and remains a continuous process. Agents don’t auto-adapt to shifting business rules; human intervention, coaching, and re-measurement are essential. Vendors promising "agents in hours" are selling the first mile; those focused on observability, monitoring, and robust Day 2 operations are selling the full journey.
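One way to picture Day 2 measurement is a rolling monitor that counts completed work units and compares the recent success rate against a baseline. The sketch below is an assumption-laden illustration: the class name, window size, and tolerance are hypothetical, and real drift detection would likely use richer signals than a single success rate.

```python
from collections import deque


class AgentMonitor:
    """Tracks task outcomes in a rolling window and flags drift from a baseline."""

    def __init__(self, baseline_success_rate: float, window: int = 100, tolerance: float = 0.10):
        self.baseline = baseline_success_rate
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # True means the task was completed correctly
        self.completed_work_units = 0

    def record(self, success: bool) -> None:
        self.outcomes.append(success)
        if success:
            self.completed_work_units += 1

    def success_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def is_drifting(self) -> bool:
        # Only judge once the window is full, to avoid noisy early alerts.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.success_rate() < self.baseline - self.tolerance


# Hypothetical outcomes: the agent starts well, then begins failing.
monitor = AgentMonitor(baseline_success_rate=0.9, window=10, tolerance=0.1)
for ok in [True] * 9 + [False]:
    monitor.record(ok)
drift_before = monitor.is_drifting()

for ok in [False, False, False]:
    monitor.record(ok)
drift_after = monitor.is_drifting()

print(monitor.completed_work_units, drift_before, drift_after)
```

A flag from a monitor like this would be the cue for the human intervention, coaching, and re-measurement the speakers described.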

Foundational Frameworks and Mental Models

Beyond the immediate takeaways, several powerful frameworks emerged, providing strategic lenses for understanding and navigating the AI revolution.

The Five-Layer AI Stack (Jensen Huang, NVIDIA)

Huang articulated AI development as a stack comprising: Power → Chips → Infrastructure → Models → Applications. Each layer operates with its own ecosystem, margin structures, and competitive dynamics. While venture capital often floods the application layer, the value of applications is entirely dependent on the underlying layers. Bottlenecks shift over time; currently, power and chip design are critical, but in the future, models or even specific applications could become the limiting factor.

A critical insight: the Applications layer is the most important because it is where tangible value accrues for customers. Chips are mere commodities unless they enable transformative applications; models are just infrastructure until they unlock new forms of work; energy is inert without a purpose. When evaluating any AI company or vendor, it’s essential to identify which layer they are genuinely innovating and winning in, rather than merely claiming broad competence.

The Three Waves of AI (Jensen Huang)

(As detailed above, this framework provides a chronological understanding of AI’s evolution: Generative (2023-2024), Reasoning (2024-2025), and Agentic (2025-2026+). This model emphasizes that the shift from simple generation to complex autonomous action will profoundly reshape business operations and human-computer interaction.)

Spatial Intelligence vs. Language Intelligence (Fei-Fei Li)

This framework clearly delineates the strengths and limitations of current AI:

- Language Intelligence (today): Excels at text generation, summarization, translation, and code. It is abstract, operates on symbols, and lacks physical world understanding.

- Spatial Intelligence (emerging): Focuses on perceiving, reasoning, and generating in 3D space. It understands physics, movement, and causality, essential for interacting with the physical world.

The ultimate vision is the convergence of these two intelligences. Language models combined with spatial models will create AI capable of both abstract reasoning about work and concrete execution in the physical world. Language alone provides the theory, spatial alone provides the mechanics; together, they embody true comprehensive intelligence.

The Maturity Curve: Automation → Discovery → Real Work (Salesforce/Slack)

Every agent deployment typically follows a three-stage progression:

- Month 1: Task Automation: Agents handle simple, repetitive tasks, freeing up human time. Initial impact is often perceived as basic efficiency.

- Month 2: Information Discovery: Agents become proficient at retrieving and synthesizing information, providing insights and answering complex queries.

- Month 3+: Real Work: Agents begin to autonomously execute multi-step workflows, make decisions, and drive tangible business outcomes.

It’s crucial not to judge agents solely on their Month 1 capabilities, which can appear mediocre. The companies that build the necessary infrastructure and maintain the discipline to reach Month 3 work are the ones that truly win.

Agentic Work Units vs. Tokens (Salesforce)

A critical distinction for measuring AI impact:

- Tokens: The segments of text a large language model processes, which makes them a measure of input effort rather than results. Optimizing for token efficiency alone can be misleading.

- Agentic Work Units: The measure of output, representing actual completed tasks or delivered business value.

The common mistake is to obsess over token efficiency without translating it into meaningful work units. True value lies in the impact of completed tasks, not merely in the volume of processed tokens.
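The distinction can be made concrete by dividing token spend by completed tasks. The sketch below is illustrative only: the `AgentRun` structure, the token price, and the two agents' numbers are hypothetical, and "work unit" is simplified to a count of completed tasks.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AgentRun:
    tokens_used: int
    tasks_completed: int


def cost_per_work_unit(runs: List[AgentRun], price_per_1k_tokens: float) -> float:
    """Token spend divided by completed tasks; infinite if nothing was completed."""
    total_tokens = sum(r.tokens_used for r in runs)
    total_tasks = sum(r.tasks_completed for r in runs)
    if total_tasks == 0:
        return float("inf")
    return (total_tokens / 1000) * price_per_1k_tokens / total_tasks


PRICE = 0.01  # hypothetical price per 1,000 tokens

# Agent A looks cheaper on tokens alone; agent B completes far more work.
agent_a = [AgentRun(tokens_used=50_000, tasks_completed=5)]
agent_b = [AgentRun(tokens_used=80_000, tasks_completed=20)]

cost_a = cost_per_work_unit(agent_a, PRICE)
cost_b = cost_per_work_unit(agent_b, PRICE)

print(f"A: {cost_a:.3f} per task, B: {cost_b:.3f} per task")
```

With these invented numbers, the agent that uses fewer tokens costs more per completed task, which is the trap the framework warns against.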

Three Constraints on Frontier Models (Fei-Fei Li)

For any cutting-edge AI company, three fundamental factors constrain growth:

- Compute: The processing power required to train and run large models.

- Models: The architectural sophistication and size of the AI models themselves.

- Data: The quantity and quality of information available for training.

While many companies fixate on compute and model advancements, data is often the hidden and most critical constraint. Spatial data, in particular, is significantly scarcer than language data. The "data flywheel"—where the output of AI models itself becomes input for training the next generation—is where true defensibility and competitive advantage reside.

Two Ways to Think About "Busy" (Jensen Huang)

Leaders approach AI adoption in two distinct ways:

- Prescriptive: "Here’s exactly what you should do with AI." This approach is necessary for critical core functions like coding, supply chain management, or chip design, where adherence to the frontier and avoiding failure are paramount.

- Inspiring: "Here’s why this matters. I can’t predict exactly how you’ll use it, but this is the direction." This approach encourages broad experimentation and allows "a thousand flowers to bloom" in less critical areas, fostering innovation across the organization.

The most effective leaders balance these two approaches, providing clear direction in strategic domains while empowering broad exploration elsewhere.

Broader Industry Developments and Future Outlook

The insights from the Global AI Innovation Summit are already reflected in significant industry movements. Anthropic, a leading AI research company, recently reported a remarkable $3 billion revenue run rate and is aggressively investing in infrastructure. Its strategic partnerships with Google and Broadcom to develop custom AI chips underscore the growing importance of owning the silicon layer to control cost-per-token at scale—a direct echo of Salil Deshpande’s warning on unit economics.

Similarly, Trimble’s acquisition of Document Crunch, an AI-powered contract risk analysis platform, highlights the accelerating trend of purpose-built AI integrating into incumbent platforms within specific verticals. Construction, a historically slow adopter of digital transformation, is now leveraging AI for document compliance, dispute prevention, and notification management, demonstrating how specialized AI can create significant wedges in underserved industries.

These developments, combined with the groundbreaking discussions at the summit, paint a clear picture of AI’s future: a landscape dominated by physically aware intelligence, autonomous agents transforming workflows, and a strategic focus on problem insight and robust unit economics. The next major convergence point for AI leaders and enthusiasts, HumanX 2027, already announced for Mandalay Bay in Las Vegas from March 7-10, 2027, promises to further explore these advancements, emphasizing the continuous and rapid evolution of the field. The journey of AI is not just about building smarter machines, but about fundamentally reshaping how humans work, innovate, and interact with an increasingly intelligent world.