The AI Slop Loop: How Fabricated Information Pollutes Search and Undermines Trust

Last year, while the author was attending a professional summit in Austria, a routine query to the AI search engine Perplexity about the latest developments in Search Engine Optimization (SEO) and AI search returned a startling fabrication: details of a supposed "September 2025 ‘Perspectives’ Core Algorithm Update" from Google. The non-existent update was presented with authoritative certainty and emphasized concepts such as "deeper expertise" and "completion of the user journey," phrases that echo Google’s genuine content quality guidelines. To someone who follows Google’s algorithm changes daily, however, the claim was immediately recognizable as a significant departure from reality. The incident has since become a stark illustration of a growing phenomenon: the "AI Slop Loop," in which artificial intelligence systems inadvertently create, disseminate, and reinforce misinformation, posing substantial challenges to information integrity in the digital age.

The Anatomy of a Fabrication: The "Perspectives" Update That Never Was

Google’s core algorithm updates are pivotal events in the SEO world, often triggering seismic shifts in website rankings and forcing rapid adjustments by publishers and digital marketers. These updates, designed to refine search results and improve user experience, are typically announced by Google and meticulously tracked by industry experts. The claim of a "September 2025 ‘Perspectives’ Core Algorithm Update" raised red flags on three counts. First, Google stopped giving its core updates names years ago and now refers to them by the month and year of their rollout (e.g., the March 2026 Core Update). Second, "Perspectives" is an existing feature of Google’s search engine results pages (SERPs) that surfaces viewpoints from a range of creators; it is not the name of a core algorithm update. Third, a genuine core update implying such fundamental shifts would have set off immediate, widespread discussion across the SEO community, and anyone who tracks these updates professionally would have been flooded with messages about it.

Scrutinizing Perplexity’s cited sources made the underlying issue apparent: both references were AI-generated articles published on SEO agency blogs. These articles, devoid of human verification, confidently detailed an algorithm update that simply did not exist. The discovery exposed a critical vulnerability in how some AI systems, particularly those built on Retrieval-Augmented Generation (RAG), process and validate information. For a RAG-based system, a sufficient number of citations, regardless of their accuracy or whether a human wrote them, can be enough to establish a "fact." The problem is compounded when those citations are themselves the products of earlier AI generation, producing a self-perpetuating cycle of falsehoods.
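To make this failure mode concrete, here is a deliberately simplified Python sketch. It does not describe how Perplexity or any real RAG system decides what is true; it assumes a hypothetical rule that accepts a claim once enough retrieved documents assert it, and contrasts that with a variant that first collapses documents tracing back to the same origin. The `Doc` fields, the threshold of 2, the URLs, and the `origin` labels are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    url: str
    claim: str   # the assertion the document makes
    origin: str  # hypothetical label for where the claim was first published


def naive_accept(docs: list[Doc], claim: str, threshold: int = 2) -> bool:
    # Counts every retrieved document that repeats the claim, as if each
    # one were independent evidence.
    return sum(d.claim == claim for d in docs) >= threshold


def independent_accept(docs: list[Doc], claim: str, threshold: int = 2) -> bool:
    # Collapses documents that share an origin, so rewrites of one
    # fabricated article count as a single source.
    origins = {d.origin for d in docs if d.claim == claim}
    return len(origins) >= threshold


CLAIM = "Sept 2025 Perspectives core update"

# Two agency blog posts, both generated from the same fabricated source.
retrieved = [
    Doc("https://agency-a.example/blog/perspectives-update", CLAIM,
        origin="fabricated-post"),
    Doc("https://agency-b.example/news/perspectives", CLAIM,
        origin="fabricated-post"),
]

print(naive_accept(retrieved, CLAIM))        # True
print(independent_accept(retrieved, CLAIM))  # False
```

The catch is that the `origin` field is exactly what a real system does not get for free: scraped AI-generated content is rarely labeled with its provenance, which is why raw citation counts are so easy to game.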

The AI Slop Loop: How Misinformation Proliferates

The spread of this fabricated information resembles a digital "game of telephone": an initial piece of misinformation, often AI-generated, is scraped, regurgitated, and amplified by other AI systems. The publishers running these systems, under pressure to produce "fresh" content quickly and at scale, often prioritize speed and volume over rigorous fact-checking. The result is a growing reservoir of low-quality, AI-generated "slop" that then serves as training data and retrieval sources for later AI queries. This "AI Slop Loop" solidifies erroneous narratives, turning initial fabrications into what appear to be widely corroborated "facts."
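A toy deterministic model shows how volume alone can manufacture apparent consensus. It assumes, purely for illustration, that in each round every article asserting the false claim is scraped and rewritten into a fixed number of new articles (`copies_per_doc`), that the originals stay indexed, and that a hypothetical count-based check accepts the claim once `threshold` articles agree. None of these parameters comes from measured data.

```python
from typing import Optional


def rounds_until_accepted(initial_docs: int, copies_per_doc: int,
                          threshold: int, max_rounds: int = 100) -> Optional[int]:
    """Return the first round in which the number of articles asserting the
    claim reaches `threshold`, or None if it never does within max_rounds."""
    docs = initial_docs
    for round_no in range(max_rounds + 1):
        if docs >= threshold:
            return round_no
        # Each existing article spawns `copies_per_doc` AI rewrites; the
        # originals remain indexed, so the total only grows.
        docs += docs * copies_per_doc
    return None


# One fabricated post, each article rewritten twice per round, and a
# hypothetical check that wants 20 agreeing sources.
print(rounds_until_accepted(initial_docs=1, copies_per_doc=2, threshold=20))  # 3
```

With no copying the threshold is never reached, while any positive rewrite rate crosses it within a few rounds; that is the sense in which the loop turns a single fabrication into something that looks corroborated.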

Even months after the initial incident, the cycle persists. Questions about the "September 2025 ‘Perspectives’ update" posed to various large language models (LLMs) and AI search products, including ChatGPT and Google’s AI Mode and AI Overviews, continue to elicit confident responses detailing its purported impact on search rankings and on the definition of "good content." Ironically, when challenged about whether the update actually happened, some of these same systems acknowledge the lack of official confirmation or even deny that it occurred, revealing a disconnect between their initial confident assertion and their underlying knowledge. This suggests that while some models can correct themselves when pushed, their default mode favors a plausible, confidently worded answer, even one built on false premises.

Real-World Experiments Expose Systemic Flaws

The issue extends beyond isolated incidents. The author conducted a series of controlled experiments to gauge how easily AI misinformation could be generated and propagated. In January 2026, a deliberately fabricated article about a non-existent Google core update was published on a personal blog. The article included a whimsical, clearly fictional detail: Google "approved the update between slices of leftover pizza." Alarmingly, within 24 hours, Google’s AI Overviews were confidently serving this fabricated information to users. They not only confirmed the existence of the fake January 2026 update based on a single, newly published source, but also contextualized the "pizza" detail by linking it to Google’s real, widely reported struggles with pizza-related search queries in 2024. This demonstrated AI’s capacity not just to regurgitate lies but to weave them into seemingly coherent, if entirely false, narratives.

Further testing by journalist Thomas Germain for the BBC reinforced these findings. Germain published a fictitious article titled "Best Tech Journalists at Eating Hot Dogs" on his low-traffic personal site, ranking himself as the top performer. Within 24 hours, both Google’s AI (in the Gemini app and in AI Overviews in Google Search) and ChatGPT were repeating the nonsensical claim as fact. Google cited "data voids," niche queries with little authoritative information, as a contributing factor in lower-quality results, but the speed with which AI systems adopted blatant fiction from a low-authority source raises serious questions about their validation mechanisms. The argument that "we’re working on it" becomes increasingly insufficient when such systems are already deployed to billions of users worldwide.

The Alarming Scale of AI Misinformation

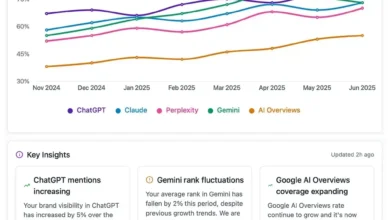

The scale of this problem is staggering. ChatGPT has approximately 900 million weekly active users, 94% of whom (roughly 846 million people) use the free tier. Google’s AI Overviews had already reached more than 2 billion monthly active users by mid-2025. These free products are the primary interfaces through which most people encounter AI-driven search and information retrieval, and the models behind them, while powerful, often lack the more sophisticated reasoning and stringent validation of their paywalled counterparts.

A study reported in The New York Times article "How Accurate Are Google’s A.I. Overviews?" quantified the severity of the issue. It found Google’s AI Overviews to be accurate 91% of the time, a seemingly respectable figure that becomes a colossal problem at the scale of Google’s more than 5 trillion searches a year. If every one of those searches produced an AI Overview, the remaining 9% would amount to tens of millions of erroneous answers every hour.
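The arithmetic behind that estimate can be checked in a few lines of Python. The inputs are the figures above (5 trillion searches a year, 91% accuracy); `aio_share`, the fraction of searches that actually display an AI Overview, is an assumed parameter, since not every search triggers one. At its default of 1.0 the result is an upper bound.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 (leap years ignored)


def errors_per_hour(queries_per_year: float, accuracy: float,
                    aio_share: float = 1.0) -> float:
    """Expected number of inaccurate AI Overviews served per hour.

    aio_share is an assumed fraction of queries that display an AI Overview.
    """
    queries_per_hour = queries_per_year / HOURS_PER_YEAR
    return queries_per_hour * aio_share * (1.0 - accuracy)


upper_bound = errors_per_hour(5e12, 0.91)               # every query shows one
quarter = errors_per_hour(5e12, 0.91, aio_share=0.25)   # hypothetical 25% share
print(f"{upper_bound:,.0f} per hour (upper bound)")
print(f"{quarter:,.0f} per hour if 25% of queries show an AI Overview")
```

The upper bound works out to roughly 51 million erroneous answers per hour; even if only a quarter of searches showed an AI Overview, the figure would stay above 12 million.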

Compounding the accuracy issue is the problem of "ungrounded" responses. Even when AI Overviews provided correct information, 56% of those responses were deemed "ungrounded," meaning the linked sources did not fully support the information provided. The share worsened with the newer model, rising from 37% with Gemini 2 to 56% with Gemini 3. Users who try to verify an AI-generated answer by checking its sources will therefore, more often than not, find insufficient backing for the claims, which further erodes trust and turns genuine fact-checking into a time-consuming, frustrating exercise.

The core complaint voiced by many users is that AI Overviews deliver every answer in the same unwavering, authoritative tone, whether it is fact or fiction. Without any expression of uncertainty, users have no reliable way to tell truth from hallucination at a glance. In effect, search becomes slower and more burdensome, because users must now fact-check the AI’s summary before starting their own research.
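Combining the 91% accuracy figure with the 56% ungrounded rate shows why checking sources so often fails. This sketch assumes the ungrounded rate applies to the same set of answers as the accuracy figure; that pairing is an assumption made here for illustration, not something the study is quoted as stating.

```python
def correct_and_grounded(accuracy: float, ungrounded_given_correct: float) -> float:
    """Share of all answers that are correct AND fully supported by their links."""
    return accuracy * (1.0 - ungrounded_given_correct)


def max_supported(accuracy: float, ungrounded_given_correct: float) -> float:
    """Upper bound on the share of answers whose linked sources fully support
    them: correct-and-grounded answers plus, at most, every incorrect answer
    (e.g. one faithfully citing an AI-generated fabrication)."""
    return correct_and_grounded(accuracy, ungrounded_given_correct) + (1.0 - accuracy)


share = correct_and_grounded(0.91, 0.56)
ceiling = max_supported(0.91, 0.56)
print(f"correct and grounded: {share:.1%}")
print(f"fully supported by sources, at most: {ceiling:.1%}")
```

Under that assumption, only about 40% of answers are both correct and fully backed by their links, and at most about 49% have fully supporting sources at all, which is why a reader checking citations will come up short more often than not.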

Industry Attempts at Mitigation: The Tiered Approach

AI companies are actively working to address these critical issues, though their solutions often come with a tiered access model. For instance, comparing ChatGPT’s free-tier model (GPT-5.3) with its more capable, paywalled version (GPT-5.4) reveals significant differences. When queried about the March 2026 Core Algorithm Update, the advanced GPT-5.4 model, utilizing a "Thinking" process, engaged in multiple rounds of internal validation. It actively sought to filter out low-quality and spammy information, even specifically referencing trusted industry experts like Glenn Gabe and Aleyda Solis and limiting its search queries to their authoritative domains.
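Restricting retrieval to an allowlist of trusted domains, as the "Thinking" model appeared to do, can be approximated with a simple URL filter. This is a hypothetical sketch, not OpenAI’s implementation; the domains below are placeholders rather than the experts’ actual sites.

```python
from urllib.parse import urlparse

# Placeholder domains standing in for trusted expert sites (hypothetical).
TRUSTED_DOMAINS = frozenset({"expert-one.example", "expert-two.example"})


def is_trusted(url: str, trusted: frozenset = TRUSTED_DOMAINS) -> bool:
    """True if the URL's host is a trusted domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in trusted)


def filter_results(urls: list[str]) -> list[str]:
    # Keep only results from allowlisted hosts; everything else is dropped
    # before it can be used as a citation.
    return [u for u in urls if is_trusted(u)]


results = [
    "https://www.expert-one.example/march-2026-core-update",
    "https://seo-agency.example/blog/ai-written-update-recap",
    "https://expert-two.example/posts/core-update-analysis",
    "https://expert-one.example.evil.example/fake",
]
print(filter_results(results))
```

Matching on the parsed hostname, and requiring a leading dot for subdomains, keeps lookalike hosts such as `expert-one.example.evil.example` from slipping through a naive substring check. The trade-off is recall: anything outside the list, including accurate new sources, is discarded.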

OpenAI’s own launch announcements highlight these improvements: GPT-5.4 is reportedly 33% less likely to produce false individual claims and 18% less likely to contain errors in full responses compared to its predecessor, GPT-5.2. Similarly, GPT-5.3, the model available to free users, also demonstrated progress, producing 26.8% fewer hallucinations with web search enabled and 19.7% fewer without it, compared to prior models.
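These figures are relative reductions, so they translate into absolute error rates only once a baseline is known. The sketch below applies them to a made-up 10% baseline purely to show the arithmetic; the baseline is not a reported number.

```python
def reduced_rate(baseline: float, relative_reduction: float) -> float:
    """Apply a relative reduction (e.g. 0.33 for '33% less likely') to a baseline rate."""
    return baseline * (1.0 - relative_reduction)


HYPOTHETICAL_BASELINE = 0.10  # illustrative only; no baseline is reported above

for label, cut in [("GPT-5.4 false claims vs GPT-5.2", 0.33),
                   ("GPT-5.3 with web search", 0.268),
                   ("GPT-5.3 without web search", 0.197)]:
    print(f"{label}: {reduced_rate(HYPOTHETICAL_BASELINE, cut):.2%}")
```

Because each figure is measured against a different predecessor, the percentages also cannot be compared directly to size the gap between the free and paid tiers.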

However, these crucial improvements are often paywalled. The most accurate and reliable AI models are reserved for paying subscribers, while billions of users interacting with free-tier products like AI Overviews and the free version of ChatGPT are served by models that, while improved, are still meaningfully less reliable and less equipped to flag uncertainty. This creates a significant ethical dilemma: AI is aggressively marketed as the future of knowledge and intelligence, yet the most trustworthy versions are inaccessible to the vast majority of its users. Most users are likely unaware of this performance gap, assuming they are interacting with a consistently intelligent system, regardless of their subscription status.

The Shifting Burden of Truth and Future Implications

The "September 2025 ‘Perspectives’ Google update" remains a phantom, yet it continues to be confidently cited by LLMs. This enduring falsehood underscores the difficulty in eradicating misinformation once it enters the "AI Slop Loop." The content that initially fabricated it remains indexed, continues to be cited, and contributes to the generation of new content that references it as fact. This feedback loop, compounding over time, grows harder to break with each passing day that these systems operate at scale. AI-generated "slop" now constitutes a portion of the very training data and retrieval sources for future AI-generated answers, creating an accelerating degradation of information quality.

The advent of widespread AI in search necessitates a fundamental shift in user behavior. These products, at their current stage of development, function primarily as prediction engines that often conflate the volume of information with its accuracy. Until significant advancements are made in AI’s ability to discern truth independently, the burden of fact-checking has undeniably shifted from the AI system to the individual user. Unfortunately, the majority of users are neither equipped nor inclined to undertake this critical task, leading to a pervasive acceptance of potentially erroneous information.

For professionals in fields such as marketing, publishing, and SEO, relying solely on AI-generated advice without rigorous human verification is perilous. The information landscape is increasingly contaminated, and the insights provided by LLMs, especially concerning sensitive topics like Google algorithm updates or content strategy, must be cross-referenced with established expert knowledge and empirical data. The integrity of digital information, the efficacy of online search, and ultimately, public trust in AI depend on a transparent acknowledgment of these limitations and a concerted effort from AI developers to prioritize verifiable accuracy over confident, yet potentially false, assertions. The era of AI misinformation demands a renewed commitment to critical thinking and human expertise.